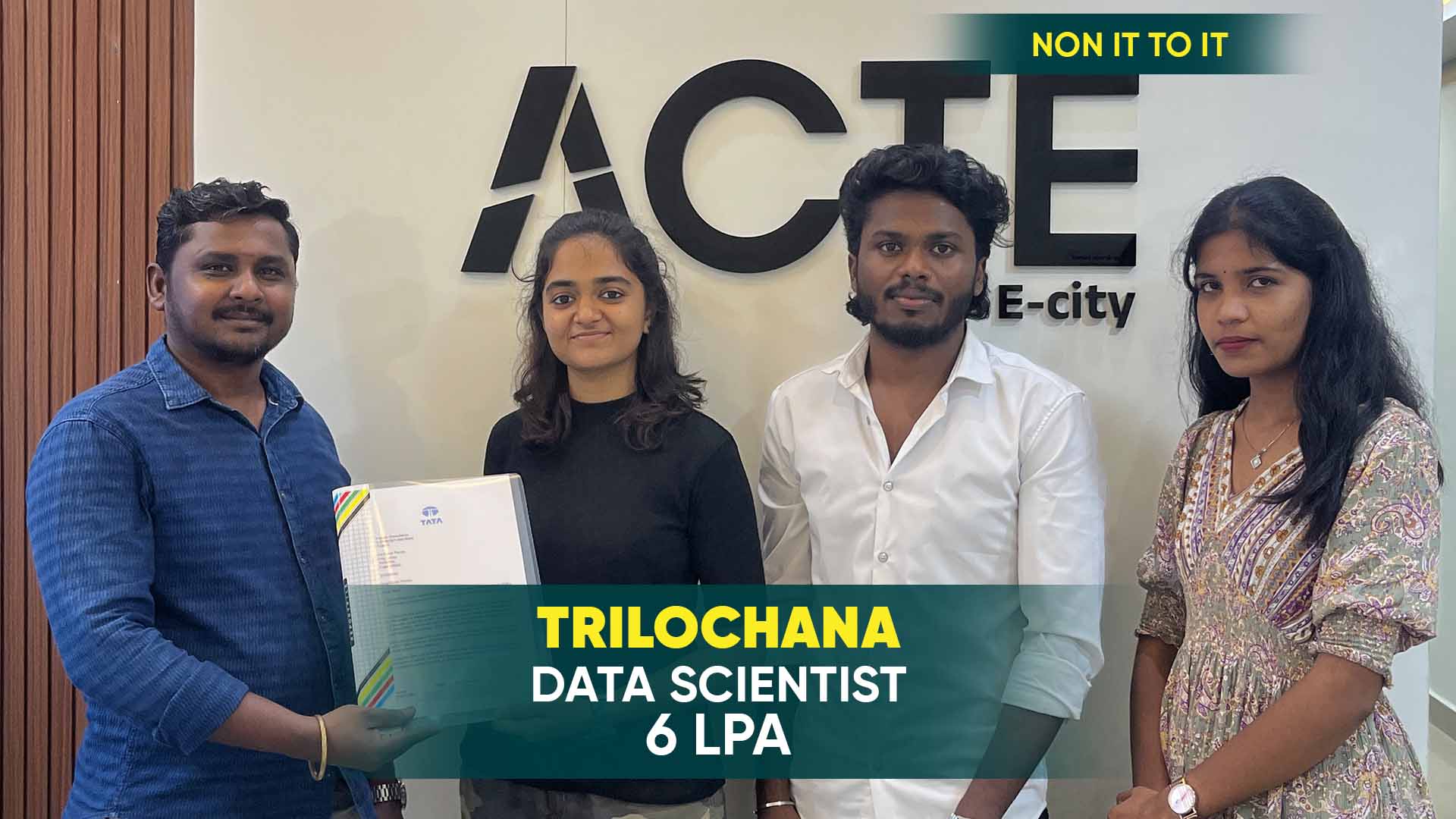

550+ Students Placed Every Month. Be the Next!

Our Hiring Partners

Curriculum Designed By Experts

Expertly designed curriculum for future-ready professionals.

Industry Oriented Curriculum

An exhaustive curriculum, designed by our industry experts, to help you get placed in your dream IT company.

- 30+ Case Studies & Projects

- 9+ Engaging Projects

- 10+ Years Of Experience

Hadoop Training Projects

Become a Hadoop Expert With Practical and Engaging Projects.

- Practice Essential Tools

- Designed by Industry experts

- Get Real-world Experience

Word Count Using MapReduce

This classic beginner project involves writing a MapReduce program to count the occurrence of words in a text file. It helps in understanding the fundamental concepts of Hadoop, including HDFS and the MapReduce framework.
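To give a feel for the idea, here is a minimal sketch of the map, shuffle, and reduce steps in plain Python. It runs locally and does not use the Hadoop API; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are illustrative assumptions, not part of the course material.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Mapper: emit (word, 1) for every word in one line of input."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group emitted values by key, as the framework would do."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reducer: sum the counts collected for one word."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run the three phases in sequence over an iterable of text lines."""
    pairs = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["big data big ideas", "data moves fast"]))
```

On a real cluster the input lines would come from files in HDFS and the mapper and reducer would run in parallel on different nodes; the sketch only shows the logic each phase carries out.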

Log File Analysis with Apache Pig

In this project, learners analyze large-scale log files using Apache Pig to extract meaningful insights, such as error frequency, user behavior, and system performance. This project helps in understanding data flow scripting.
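The load, filter, group, and count steps of such a data flow can be sketched in plain Python. This is not Pig Latin, and the log format (`<date> <time> <LEVEL> <message>`) is an assumption made only for illustration.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def parse_line(line: str) -> Optional[Tuple[str, str]]:
    """Split one log line into (level, message); return None if malformed.

    Assumed (hypothetical) format: "<date> <time> <LEVEL> <message>".
    """
    parts = line.strip().split(" ", 3)
    if len(parts) < 4:
        return None
    _date, _time, level, message = parts
    return level, message


def error_frequency(lines: Iterable[str]) -> Counter:
    """Count how often each ERROR message appears."""
    records = (parse_line(line) for line in lines)            # load
    errors = (r for r in records if r and r[0] == "ERROR")    # filter
    return Counter(message for _level, message in errors)     # group + count


if __name__ == "__main__":
    sample = [
        "2024-01-01 10:00:00 ERROR disk full",
        "2024-01-01 10:00:01 INFO service started",
        "2024-01-01 10:00:02 ERROR disk full",
    ]
    print(error_frequency(sample).most_common())
```

Each stage consumes the output of the previous one, which is the same shape a data flow script has when it runs over much larger log files.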

Movie Ratings Analysis

This project involves analyzing a dataset containing movie ratings and user preferences using Apache Hive. It introduces learners to data warehousing concepts, querying large datasets with HQL, and partitioning strategies.

Clickstream Data Analysis

This project involves analyzing website clickstream data to gain insights into user behavior and preferences. Learners use Hive and HDFS to process and store data while applying aggregations and filters.

Stock Market Data Processing

In this project, learners use Apache Spark to process stock market data, analyze price trends, and generate reports. It provides exposure to real-time data processing and Spark’s fast computation capabilities.

Customer Segmentation Using HBase

Learners use Apache HBase to store and retrieve customer transaction data to perform customer segmentation based on purchasing behavior. This project provides exposure to NoSQL databases and real-time querying.

IoT Data Processing

Learners work with IoT sensor data to analyze trends and detect anomalies using Apache Spark and Hadoop. This project gives exposure to handling large-scale real-time data from IoT devices.

Weather Data Analysis

In this project, learners work with historical weather data to predict climate patterns. The project involves data processing with Hive, HDFS storage, and visualization using BI tools.

Real-Time Fraud Detection System

This project involves using Hadoop, Spark Streaming, and Kafka to build a fraud detection system that analyzes real-time transactions and detects anomalies based on predefined rules.
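As a rough illustration of rule-based detection, the sketch below checks each transaction in a stream against a small set of predefined rules. It is plain Python rather than Spark Streaming or Kafka code, and the `Transaction` fields, the rule names, the amount limit, and the home country are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Iterator, List, Tuple

AMOUNT_LIMIT = 10_000    # hypothetical threshold
HOME_COUNTRY = "IN"      # hypothetical home country code


@dataclass(frozen=True)
class Transaction:
    account: str
    amount: float
    country: str


Rule = Callable[[Transaction], bool]

# Each rule returns True when a transaction looks suspicious.
RULES: Dict[str, Rule] = {
    "large_amount": lambda t: t.amount > AMOUNT_LIMIT,
    "foreign_country": lambda t: t.country != HOME_COUNTRY,
}


def flag_transactions(
    stream: Iterable[Transaction], rules: Dict[str, Rule] = RULES
) -> Iterator[Tuple[Transaction, List[str]]]:
    """Yield each transaction that breaks a rule, with the rule names it hit."""
    for txn in stream:
        hits = [name for name, rule in rules.items() if rule(txn)]
        if hits:
            yield txn, hits


if __name__ == "__main__":
    incoming = [
        Transaction("acc-1", 50.0, "IN"),
        Transaction("acc-2", 25_000.0, "IN"),
        Transaction("acc-3", 12_000.0, "US"),
    ]
    for txn, hits in flag_transactions(incoming):
        print(txn.account, hits)
```

Because `flag_transactions` is a generator, it handles one record at a time, which mirrors how a streaming job would evaluate transactions as they arrive rather than waiting for a full batch.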

Career Support

Placement Assistance

Exclusive access to ACTE Job portal

Mock Interview Preparation

1 on 1 Career Mentoring Sessions

Career Oriented Sessions

Resume & LinkedIn Profile Building

Key Features

Practical Training

Global Certifications

Flexible Timing

Trainer Support

Study Material

Placement Support

Mock Interviews

Resume Building

Upcoming Batches

What's included

Free Aptitude and Technical Skills Training

- Learn basic maths and logical thinking to solve problems easily.

- Understand simple coding and technical concepts step by step.

- Get ready for exams and interviews with regular practice.

Hands-On Projects

- Work on real-time projects to apply what you learn.

- Build mini apps and tools daily to enhance your coding skills.

- Gain practical experience just like in real jobs.

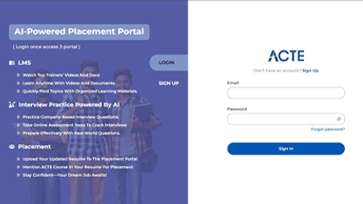

AI-Powered Self Interview Practice Portal

- Practice interview questions with instant AI feedback.

- Improve your answers by speaking and reviewing them.

- Build confidence with real-time mock interview sessions.

Interview Preparation For Freshers

- Practice company-based interview questions.

- Take online assessment tests to crack interviews.

- Practice confidently with real-world interview and project-based questions.

LMS Online Learning Platform

- Explore expert trainer videos and documents to boost your learning.

- Study anytime with on-demand videos and detailed documents.

- Quickly find topics with organized learning materials.

- Learning strategies that are appropriate and tailored to your company's requirements.

- Instructor-guided live projects are a feature of the virtual learning environment.

- The curriculum consists of full-day lectures, practical exercises, and case studies.

Hadoop Training Overview

Career Paths for Hadoop Training in Velachery

Completing Hadoop training in Velachery opens up numerous opportunities for professionals in data engineering, big data analytics, and cloud computing. As businesses increasingly rely on data-driven decision-making, the demand for skilled Hadoop programmers is growing. One of the most common roles is Big Data Engineer, responsible for developing and maintaining large-scale data processing systems using Hadoop and its ecosystem components like MapReduce, Hive, and Spark. Another career path is Data Analyst, where professionals extract valuable insights from massive datasets using Hadoop tools such as Pig and Hive. For those interested in machine learning, Data Scientists often leverage Hadoop’s distributed computing power to process and analyze large datasets. Organizations also require Hadoop Administrators, who manage the infrastructure, optimize cluster performance, and ensure the security of Hadoop environments.

The Requirements for Hadoop Training Course in Velachery

- Basic Programming Knowledge: While Hadoop itself is not a programming language, it is written in Java. Familiarity with Java, Python, or Scala can be beneficial when working with Hadoop components such as MapReduce and Spark.

- Understanding of Linux and Command Line: Since Hadoop primarily runs on Linux-based systems, understanding basic Linux commands and shell scripting is essential.

- Database and SQL Knowledge: Many Hadoop tools, such as Apache Hive and Apache Impala, use SQL-like query languages. Knowledge of relational databases, SQL queries, and indexing can help professionals analyze large datasets effectively.

- Basics of Distributed Systems: Hadoop is a distributed computing framework designed to process large datasets across multiple nodes. Key concepts include cluster management, fault tolerance, parallel processing, data replication, and load balancing.

- Knowledge of Data Processing Frameworks: Hadoop is not just about HDFS and MapReduce. The Hadoop ecosystem includes various tools that enhance big data processing.
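To make data replication and fault tolerance more concrete, here is a toy sketch of how a file might be split into blocks and copied to several nodes. The 128 MB block size and replication factor of 3 match the HDFS defaults in recent Hadoop releases, but the round-robin placement is a simplifying assumption and not the rack-aware policy HDFS actually uses; the function and node names are hypothetical.

```python
import math
from typing import Dict, List

BLOCK_SIZE_MB = 128   # HDFS default block size in recent Hadoop releases
REPLICATION = 3       # HDFS default replication factor


def place_blocks(
    file_size_mb: int,
    nodes: List[str],
    replication: int = REPLICATION,
    block_size_mb: int = BLOCK_SIZE_MB,
) -> Dict[int, List[str]]:
    """Assign each block of a file to `replication` distinct nodes.

    Toy round-robin placement for illustration only.
    """
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    n_blocks = math.ceil(file_size_mb / block_size_mb)
    return {
        block: [nodes[(block + r) % len(nodes)] for r in range(replication)]
        for block in range(n_blocks)
    }


def readable_after_failure(placement: Dict[int, List[str]], failed: str) -> bool:
    """True if every block still has a replica on a node other than `failed`."""
    return all(
        any(node != failed for node in replicas)
        for replicas in placement.values()
    )


if __name__ == "__main__":
    layout = place_blocks(300, ["node1", "node2", "node3", "node4"])
    print(layout)
    print(readable_after_failure(layout, "node3"))
```

With three replicas per block, losing any single node still leaves two copies of each block, which is the basic reason a cluster can tolerate individual machine failures.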

Reasons to Enroll in Hadoop Training in Velachery

Hadoop has revolutionized the way businesses process and manage large-scale data, making it a must-have skill for data professionals. One of the main reasons to learn Hadoop is its high demand in the job market. Organizations across industries, including finance, healthcare, e-commerce, and telecommunications, are leveraging Hadoop to manage and analyze massive datasets. This creates a continuous need for skilled Hadoop professionals who can develop, maintain, and optimize big data solutions. Another compelling reason is Hadoop’s versatility. It supports various applications, including real-time analytics, machine learning, fraud detection, and recommendation systems. As a result, professionals with Hadoop expertise can explore diverse career opportunities, ranging from big data engineering to data science.

Techniques and Trends in Hadoop Development Course in Velachery

- Integration with Cloud Platforms: Organizations are shifting to cloud-based Hadoop solutions such as AWS EMR, Azure HDInsight, and Google Cloud Dataproc for scalability and cost efficiency.

- Real-Time Data Processing: Tools like Apache Spark and Flink are increasingly used with Hadoop to process data in real time rather than in batches.

- AI and Machine Learning with Hadoop: Machine learning libraries such as Apache Mahout and TensorFlow can be used with Hadoop for advanced AI and ML applications.

- Data Security Enhancements: Hadoop security tools like Apache Ranger and Knox help protect sensitive data and support compliance with regulations like GDPR.

- Edge Computing and IoT Integration: Hadoop is used in IoT applications where massive amounts of data from connected devices need to be processed efficiently.

- Data Governance and Metadata Management: Apache Atlas and other metadata management tools are becoming essential for managing large-scale Hadoop environments.

The Most Recent Hadoop Coaching in Velachery with Tools

Hadoop continues to evolve, with new tools and enhancements improving big data processing efficiency. Apache Spark remains a dominant tool in the ecosystem, offering lightning-fast in-memory processing compared to traditional MapReduce. Apache Flink is also gaining popularity for real-time stream processing, making it useful for IoT and live analytics.

For improved data querying, Apache Hive and Presto are widely used to execute SQL-like queries on massive datasets stored in Hadoop. Apache Kudu provides an efficient columnar storage system that complements HDFS, making it useful for high-speed analytics. Meanwhile, Apache Airflow is emerging as a powerful workflow automation tool for orchestrating Hadoop data pipelines.

In terms of data ingestion, Apache NiFi simplifies data flow automation, while Apache Kafka remains the preferred tool for real-time data streaming.

Career Opportunities After Hadoop Training

Hadoop Developer

A Hadoop Developer is responsible for designing, developing, and maintaining big data applications using the Hadoop ecosystem. They write complex MapReduce programs and work with HDFS for data storage.

Big Data Engineer

A Big Data Engineer builds and manages large-scale data pipelines, ensuring efficient data ingestion, transformation, and storage. They work with distributed computing frameworks like Hadoop and Spark.

Hadoop Administrator

A Hadoop Administrator is responsible for installing, configuring, and maintaining Hadoop clusters. They monitor system performance, ensure security, manage backups, and troubleshoot issues.

Data Analyst

A Hadoop Data Analyst extracts valuable insights from massive datasets stored in Hadoop. They use tools like Hive, Impala, and Spark SQL to write queries, analyze trends, and create data visualizations.

Machine Learning Engineer

A Machine Learning Engineer specializing in Hadoop works on AI-driven solutions that require large-scale data processing. They use Hadoop and Spark to clean, preprocess, and analyze training data.

Cloud Data Engineer

A Cloud Data Engineer focuses on implementing Hadoop-based solutions in cloud environments like AWS, Azure, or Google Cloud. They work with cloud-native services such as AWS EMR and Google Cloud Dataproc.

Skills to Master

Hadoop Ecosystem Understanding

HDFS Management

MapReduce Programming

Apache Hive & SQL Queries

Apache Pig Scripting

Apache Spark for Real-Time Processing

Data Ingestion with Sqoop and Flume

Hadoop Cluster Management

Cloud Integration with Hadoop

Data Security & Access Control

NoSQL Databases & Hadoop Integration

Real-World Big Data Project Execution

Tools to Master

Apache Hadoop

Apache Spark

Apache Hive

Apache Pig

Apache HBase

Apache Sqoop

Apache Flume

Apache Oozie

Apache Zookeeper

YARN

Cloudera Manager

Apache Ambari

Learn from certified professionals who are currently working.

Training by

Sharmila, with 7 years of experience

Specialized in: Hadoop Architecture, Big Data Processing, Distributed Computing, and Cloud Data Solutions.

Note: Sharmila is an expert in designing large-scale Hadoop clusters and optimizing data workflows. She has trained professionals in big data analytics and helped enterprises transition to Hadoop-based solutions efficiently.

Premium Training at Best Price

Affordable, Quality Training for Freshers to Launch IT Careers & Land Top Placements.

What Makes ACTE Training Different?

| Feature | ACTE Technologies | Other Institutes |
| --- | --- | --- |
| Affordable Fees | Competitive Pricing With Flexible Payment Options. | Higher Fees With Limited Payment Options. |
| Industry Experts | Well Experienced Trainer From a Relevant Field With Practical Training | Theoretical Class With Limited Practical |
| Updated Syllabus | Updated and Industry-relevant Course Curriculum With Hands-on Learning. | Outdated Curriculum With Limited Practical Training. |
| Hands-on projects | Real-world Projects With Live Case Studies and Collaboration With Companies. | Basic Projects With Limited Real-world Application. |
| Certification | Industry-recognized Certifications With Global Validity. | Basic Certifications With Limited Recognition. |
| Placement Support | Strong Placement Support With Tie-ups With Top Companies and Mock Interviews. | Basic Placement Support |
| Industry Partnerships | Strong Ties With Top Tech Companies for Internships and Placements | No Partnerships, Limited Opportunities |
| Batch Size | Small Batch Sizes for Personalized Attention. | Large Batch Sizes With Limited Individual Focus. |
| LMS Features | Lifetime Access Course video Materials in LMS, Online Interview Practice, upload resumes in Placement Portal. | No LMS Features or Perks. |
| Training Support | Dedicated Mentors, 24/7 Doubt Resolution, and Personalized Guidance. | Limited Mentor Support and No After-hours Assistance. |

We are proud to have supported more than 40,000 career transitions globally.

Hadoop Certification

Yes, many certification providers offer online exams for Hadoop training.

Real-world experience is not a strict requirement for obtaining a Hadoop certification, but it adds significant value. Certification exams mainly test theoretical knowledge, concepts, and problem-solving skills in a structured format.

A Hadoop certification validates your expertise in handling big data processing frameworks and distributed computing. The certification proves that you are proficient in working with tools like HDFS, MapReduce, Spark, Hive, and Pig.

Most Hadoop certification exams do not have strict prerequisites, but having a background in programming, Linux, and databases is beneficial. Knowledge of SQL, Python, or Java can help in understanding data processing concepts.

Yes, ACTE’s Hadoop Training Certification is highly valuable for individuals looking to advance their careers in big data and analytics. The certification ensures that you receive in-depth knowledge of Hadoop architecture, data processing frameworks, and distributed computing techniques.

Frequently Asked Questions

- Yes, ACTE provides a demo session to help you understand the course structure.

- The demo session covers an introduction to Hadoop, training methodologies, and interaction with instructors.

- ACTE instructors are experienced professionals with extensive knowledge in big data and Hadoop technologies. They bring years of industry experience in handling real-time projects using tools like Hadoop, Spark, Hive, and Pig.

- Yes, ACTE provides dedicated placement support to help students secure jobs after completing the Hadoop training. The placement assistance includes resume-building sessions, mock interviews, and access to a network of hiring partners.

- Upon successful completion, you will receive an industry-recognized Hadoop certification.

- This certification validates your expertise in HDFS, MapReduce, Apache Spark, Hive, and other big data technologies.

- Yes, the Hadoop course includes hands-on training with real-world projects. Students get to work on case studies, data processing pipelines, and large-scale analytics applications. These projects help learners understand how Hadoop is used in industries such as banking, healthcare, and e-commerce.
