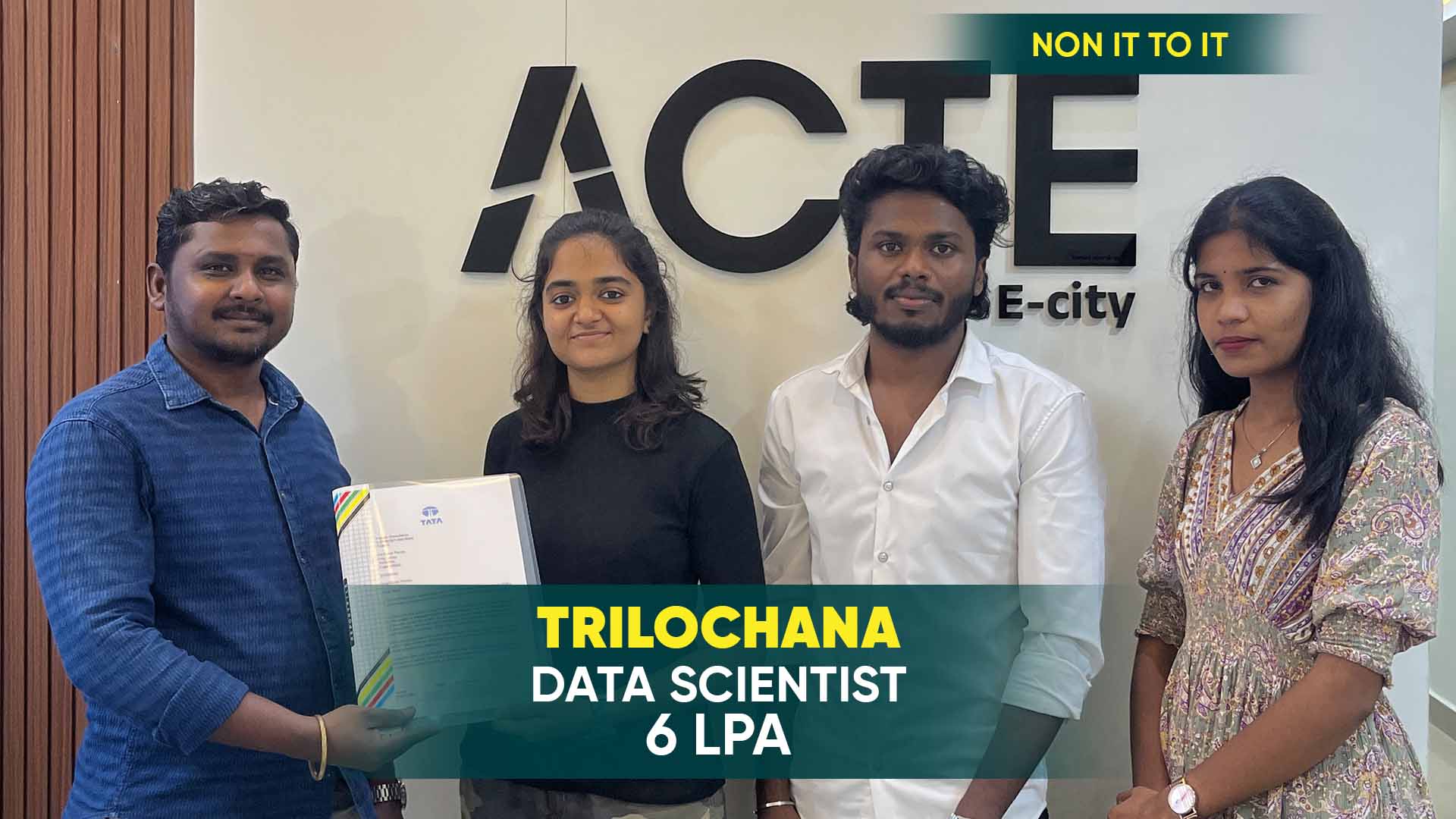

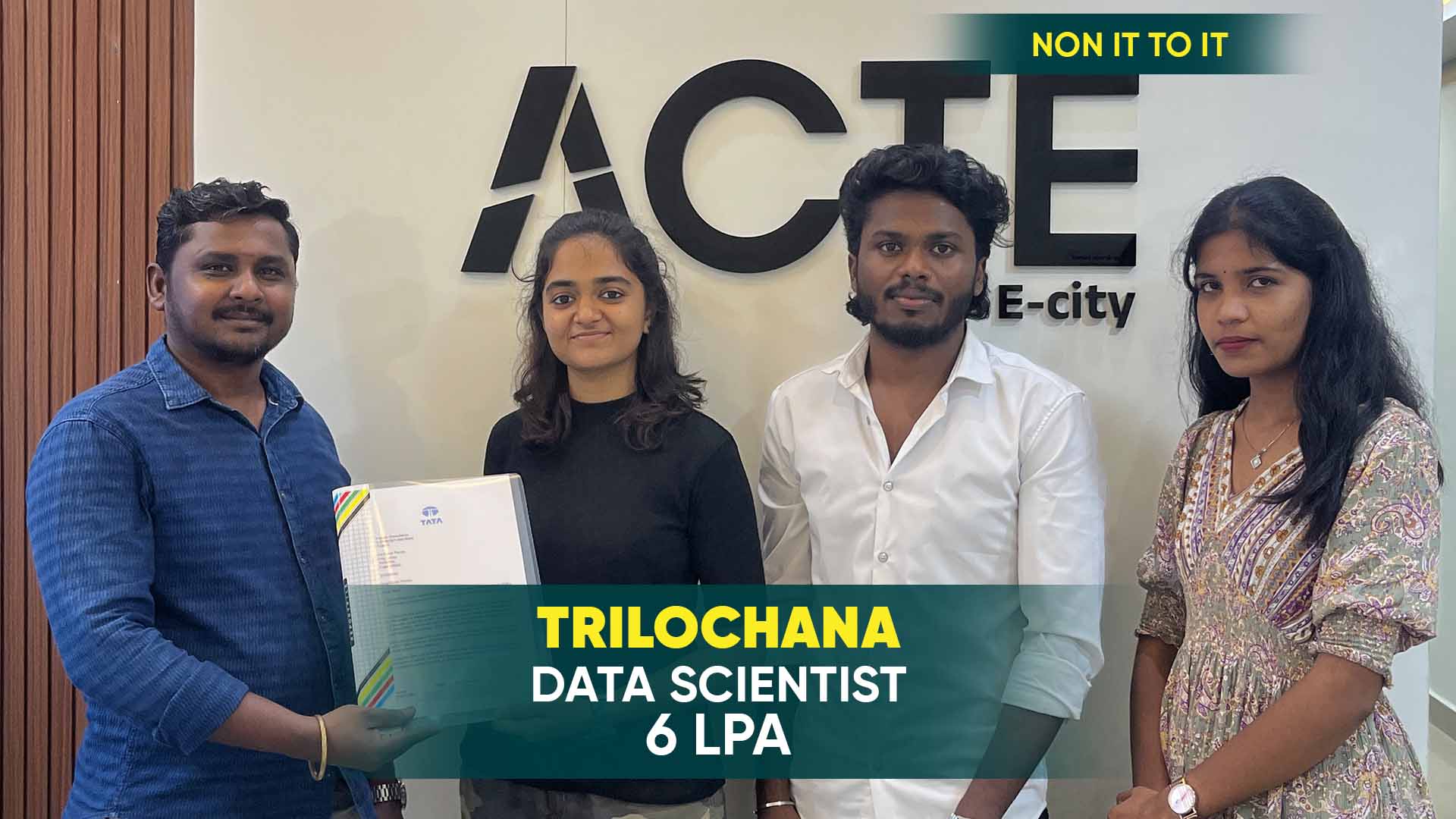

550+ Students Placed Every Month. Be the Next!

Our Hiring Partners

Curriculum Designed By Experts

Expertly designed curriculum for future-ready professionals.

Industry Oriented Curriculum

A comprehensive curriculum, designed by our industry experts, to help you get placed in your dream IT company.

- 30+ Case Studies & Projects

- 9+ Engaging Projects

- 10+ Years Of Experience

Hadoop Training Projects

Become a Hadoop Expert With Practical and Engaging Projects.

- Practice essential Tools

- Designed by Industry experts

- Get Real-world Experience

HDFS Basics

Learn the fundamentals of Hadoop Distributed File System (HDFS) by creating a simple file storage application that stores and retrieves data across multiple nodes in a Hadoop cluster.
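To give a feel for what "storing data across multiple nodes" means, here is a minimal Python sketch of how a file might be cut into blocks and how copies of each block might be spread over nodes. It assumes the HDFS defaults of 128 MB blocks and a replication factor of 3. The function names and the round-robin placement are illustrative assumptions only; real HDFS placement is rack-aware and decided by the NameNode.

```python
def split_into_blocks(file_size, block_size=128 * 1024 * 1024):
    """Return the size in bytes of each block a file would occupy.

    block_size defaults to 128 MB, the usual HDFS default.
    """
    full_blocks, remainder = divmod(file_size, block_size)
    return [block_size] * full_blocks + ([remainder] if remainder else [])


def place_replicas(num_blocks, nodes, replication=3):
    """Assign each block to `replication` distinct nodes, round-robin.

    Simplification: real HDFS uses rack-aware placement, not round-robin.
    """
    copies = min(replication, len(nodes))  # cannot exceed the node count
    return {
        block: [nodes[(block + i) % len(nodes)] for i in range(copies)]
        for block in range(num_blocks)
    }


if __name__ == "__main__":
    blocks = split_into_blocks(300 * 1024 * 1024)  # a 300 MB file
    # Hypothetical node names, used only for illustration.
    print(len(blocks), place_replicas(len(blocks), ["node1", "node2", "node3", "node4"]))
```

Because every block lives on several nodes, losing one node does not lose the file, which is the property the project is meant to demonstrate.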

Word Count using MapReduce

Implement the classic word count program using MapReduce to understand how Hadoop processes large datasets. This project will teach you the core concepts of mapping and reducing in distributed computing.
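The map, shuffle, and reduce steps behind word count can be sketched in plain Python before moving to a real cluster. This is a single-process illustration under our own assumptions (the function names and the simple tokenizing rule are ours), not the Hadoop API. On a cluster, the framework runs the map and reduce steps in parallel and performs the shuffle itself.

```python
import re
from collections import defaultdict


def map_words(line):
    """Map phase: emit a (word, 1) pair for every word in one input line."""
    return [(word, 1) for word in re.findall(r"[a-z0-9']+", line.lower())]


def shuffle(pairs):
    """Shuffle phase: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_counts(word, counts):
    """Reduce phase: sum the counts collected for one word."""
    return word, sum(counts)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = [pair for line in lines for pair in map_words(line)]
    return dict(reduce_counts(word, counts) for word, counts in shuffle(pairs).items())


if __name__ == "__main__":
    print(word_count(["Hadoop stores data", "Hadoop processes data"]))
```

Each mapper only ever sees one line and each reducer only one word, which is what lets the same logic scale across many machines.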

Basic Data Analysis with Hive

Perform simple queries and analysis on large datasets stored in HDFS using Apache Hive. Gain hands-on experience with Hive's SQL-like query language and data warehousing capabilities.

Data Processing with Pig

Use Apache Pig to process and analyze large datasets with a high-level data flow language. This project will focus on writing Pig scripts for data transformations and analysis.

Cluster Setup and Data Management

Set up a small Hadoop cluster using virtual machines or cloud platforms, and learn how to manage, monitor, and troubleshoot the cluster's performance and resource allocation.

Log File Processing with MapReduce

Design a project that processes log files from web servers or applications. Use MapReduce to filter and analyze logs, extracting useful insights such as error rates or user activities.
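As a rough illustration of the error-rate idea, the sketch below applies the same map and reduce pattern to log lines in plain Python. It assumes a hypothetical log format in which the last space-separated field is the HTTP status code, and it treats 5xx codes as errors. Both choices are assumptions for this example; real server logs need a parser that matches their actual layout.

```python
from collections import Counter


def map_status(line):
    """Map phase: emit ("error", 1) or ("ok", 1) for one log line.

    Assumes the last field is an HTTP status code (hypothetical format).
    Malformed lines are filtered out by emitting nothing.
    """
    fields = line.split()
    if not fields or not fields[-1].isdigit():
        return []
    status = int(fields[-1])
    return [("error" if status >= 500 else "ok", 1)]


def reduce_totals(pairs):
    """Reduce phase: sum the emitted counts per key."""
    totals = Counter()
    for key, value in pairs:
        totals[key] += value
    return totals


def error_rate(lines):
    """Return the fraction of well-formed requests that ended in a 5xx status."""
    totals = reduce_totals(pair for line in lines for pair in map_status(line))
    seen = totals["error"] + totals["ok"]
    return totals["error"] / seen if seen else 0.0


if __name__ == "__main__":
    sample = ["GET /home 200", "GET /cart 500", "corrupted entry", "GET /pay 503"]
    print(error_rate(sample))
```

The filter step (dropping malformed lines) happens in the mapper, so bad records never reach the reducer.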

Real-Time Analytics with Apache Spark

Implement real-time data analytics using Apache Spark for processing large streams of data at scale. This project covers Spark’s capabilities with batch and streaming data processing.

Hadoop Security Implementation

Work on securing a Hadoop cluster by implementing Kerberos authentication and configuring data encryption, ensuring data security and privacy in large-scale distributed environments.

Predictive Analytics using Machine Learning

Leverage Hadoop and Spark to process data for predictive analytics. Build a machine learning model for real-time data analysis, such as predicting customer churn or forecasting sales.

Career Support

Placement Assistance

Exclusive access to ACTE Job portal

Mock Interview Preparation

1 on 1 Career Mentoring Sessions

Career Oriented Sessions

Resume & LinkedIn Profile Building

Key Features

Practical Training

Global Certifications

Flexible Timing

Trainer Support

Study Material

Placement Support

Mock Interviews

Resume Building

Upcoming Batches

What's included

Free Aptitude and Technical Skills Training

- Learn basic maths and logical thinking to solve problems easily.

- Understand simple coding and technical concepts step by step.

- Get ready for exams and interviews with regular practice.

Hands-On Projects

- Work on real-time projects to apply what you learn.

- Build mini apps and tools daily to enhance your coding skills.

- Gain practical experience just like in real jobs.

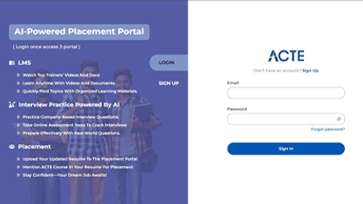

AI-Powered Self Interview Practice Portal

- Practice interview questions with instant AI feedback.

- Improve your answers by speaking and reviewing them.

- Build confidence with real-time mock interview sessions.

Interview Preparation For Freshers

- Practice company-based interview questions.

- Take online assessment tests to crack interviews.

- Practice confidently with real-world interview and project-based questions.

LMS Online Learning Platform

- Explore expert trainer videos and documents to boost your learning.

- Study anytime with on-demand videos and detailed documents.

- Quickly find topics with organized learning materials.

- Learning strategies tailored to your company's requirements.

- Instructor-guided live projects are part of the virtual learning environment.

- The curriculum consists of full-day lectures, practical exercises, and case studies.

Hadoop Training Overview

Goals Achieved in Hadoop Training in Chennai

In a Hadoop training course in Chennai, students gain a strong understanding of big data processing using the Hadoop ecosystem. The training covers key components such as HDFS, MapReduce, YARN, Hive, Pig, and Spark. The course focuses on data storage, distributed computing, and real-time data processing. By the end of the program, students will have practical skills in building scalable data solutions, optimizing Hadoop clusters, and integrating big data technologies. They will also improve their problem-solving and analytical abilities, preparing them for roles in data engineering, analytics, and big data management.

Future Trends for Hadoop Course in Chennai

- Cloud Integration: Future courses will focus on deploying and managing Hadoop on cloud platforms like AWS, Azure, and Google Cloud.

- Real-Time Data Processing: With the growing demand for real-time analytics, more emphasis will be placed on tools like Apache Spark and Apache Kafka.

- AI and Machine Learning: Hadoop courses will incorporate AI and machine learning techniques for analyzing big data and building predictive models.

- Data Security and Governance: As data privacy regulations increase, future training will prioritize Hadoop security best practices, including encryption and compliance with global standards.

New Hadoop Course Frameworks

Several frameworks and tools have grown up around core Hadoop in recent years. Apache Spark has become a popular choice for in-memory data processing and is often faster than MapReduce, especially for iterative workloads. Apache Kafka is gaining traction for real-time data streaming, while Apache Flume and Apache Sqoop are commonly used for data ingestion. Newer courses also cover Apache NiFi for data flow automation and running Hadoop on cloud platforms such as AWS and Azure. These additions help students keep up with current technologies for efficient data processing and storage.

Trends and Techniques Used in Hadoop Placement in Chennai

- Portfolio Development: Focus is placed on building a strong portfolio showcasing Hadoop projects, data pipelines, and performance optimizations to attract recruiters.

- Industry Collaboration: Training institutes often collaborate with tech companies to offer internships, providing students hands-on experience in the field.

- Mock Interviews and Resume Preparation: Regular mock interview sessions and resume-building workshops are held to simulate real-world job interviews and prepare students for placement.

- Focus on Advanced Big Data Technologies: Students are trained on emerging tools like Apache Spark, Apache Kafka, and cloud services, ensuring they are equipped for high-demand roles in big data development.

Hadoop Course Uses

The Hadoop course equips individuals with the knowledge and skills to pursue careers in data engineering, data analytics, and big data solutions. By mastering Hadoop's ecosystem and related tools, students are prepared to build scalable data pipelines, manage Hadoop clusters, and process large volumes of data. The course opens up opportunities in industries such as IT, finance, healthcare, and e-commerce, where big data is central to decision-making and business intelligence. Graduates can work as Hadoop developers, data engineers, big data analysts, and system administrators. Additionally, with the growing use of Hadoop in cloud environments, opportunities in cloud-based data engineering and management are on the rise.

Career Opportunities After Hadoop Training

Hadoop Developer

A Hadoop Developer is responsible for designing, developing, and maintaining big data applications using the Hadoop ecosystem. They write complex MapReduce programs and work with HDFS for data storage.

BI Developers

BI Developers work with big data technologies to design and implement data analysis tools that provide business insights. They use Hadoop and related frameworks like Hive and Impala to manage large datasets.

Data Engineers

Data Engineers in the Big Data space are responsible for creating and managing large-scale data processing systems. They design and implement data pipelines that collect, process, and store data in Hadoop clusters.

Data Analyst

A Hadoop Data Analyst extracts valuable insights from massive datasets stored in Hadoop. They use tools like Hive, Impala, and Spark SQL to write queries, analyze trends, and create data visualizations.

Machine Learning Engineer

A Machine Learning Engineer specializing in Hadoop works on AI-driven solutions that require large-scale data processing. They use Hadoop and Spark to clean, preprocess, and analyze training data.

Cloud Data Engineer

A Cloud Data Engineer focuses on implementing Hadoop-based solutions in cloud environments like AWS, Azure, or Google Cloud. They work with cloud-native services such as Amazon EMR and Google Cloud Dataproc.

Skills to Master

HDFS Management

Hadoop Ecosystem Understanding

MapReduce Programming

Data Ingestion with Sqoop and Flume

Apache Pig Scripting

Apache Spark for Real-Time Processing

Apache Hive & SQL Queries

Hadoop Cluster Management

Cloud Integration with Hadoop

Data Security & Access Control

NoSQL Databases & Hadoop Integration

Problem-Solving and Debugging

Tools to Master

Apache Hadoop

Apache Spark

Apache Pig

Apache Hive

Apache HBase

Apache Flume

Apache Sqoop

YARN

Cloudera Manager

Apache Ambari

Learn from certified professionals who are currently working in the industry.

Training by

Sundar, with 7 years of experience

Specialized in: Hadoop Architecture, Big Data Processing, Distributed Computing, and Cloud Data Solutions.

Note: Sundar is known for his in-depth knowledge of Hadoop cluster management and big data infrastructure. He has helped multiple organizations optimize their data pipelines and performance.

Premium Training at Best Price

Affordable, Quality Training for Freshers to Launch IT Careers & Land Top Placements.

What Makes ACTE Training Different?

| Feature | ACTE Technologies | Other Institutes |
| --- | --- | --- |
| Affordable Fees | Competitive Pricing With Flexible Payment Options. | Higher Fees With Limited Payment Options. |
| Industry Experts | Well Experienced Trainer From a Relevant Field With Practical Training | Theoretical Class With Limited Practical |
| Updated Syllabus | Updated and Industry-relevant Course Curriculum With Hands-on Learning. | Outdated Curriculum With Limited Practical Training. |
| Hands-on projects | Real-world Projects With Live Case Studies and Collaboration With Companies. | Basic Projects With Limited Real-world Application. |
| Certification | Industry-recognized Certifications With Global Validity. | Basic Certifications With Limited Recognition. |
| Placement Support | Strong Placement Support With Tie-ups With Top Companies and Mock Interviews. | Basic Placement Support |
| Industry Partnerships | Strong Ties With Top Tech Companies for Internships and Placements | No Partnerships, Limited Opportunities |
| Batch Size | Small Batch Sizes for Personalized Attention. | Large Batch Sizes With Limited Individual Focus. |
| LMS Features | Lifetime Access Course video Materials in LMS, Online Interview Practice, upload resumes in Placement Portal. | No LMS Features or Perks. |
| Training Support | Dedicated Mentors, 24/7 Doubt Resolution, and Personalized Guidance. | Limited Mentor Support and No After-hours Assistance. |

We are proud to have been part of more than 40,000 career transitions globally.

Hadoop Certification

Hadoop certifications offer several advantages, including improved job prospects, enhanced technical expertise in big data processing, and recognition from industry leaders.

Yes, there are several Hadoop course certifications available, each targeting different skill levels and expertise. Some well-known certifications include the Cloudera Certified Associate (CCA) Hadoop Developer, Hortonworks Certified Apache Hadoop Administrator, and the MapR Certified Data Analyst.

Yes, many Hadoop certifications offer online exams. These exams are typically proctored to ensure integrity and can be taken from the comfort of your home or office. Online exams for Hadoop certifications usually consist of multiple-choice questions and practical tasks.

Yes, earning a Hadoop certification and placement with ACTE can be a valuable investment in your career. The training programs offer industry-relevant content, expert instructors, and hands-on experience with the Hadoop ecosystem, making you well-prepared for real-world big data roles.

Frequently Asked Questions

- ACTE offers both classroom and online training options for Hadoop, providing flexibility for students to choose the learning mode that best suits their schedule and preferences.

- Whether you prefer face-to-face interaction or the convenience of online sessions, ACTE ensures quality training and hands-on experience in both formats.

- The duration of the Hadoop course typically ranges from 6 to 8 weeks for intensive training.

- Resume building

- Mock interviews

- Job referrals

- Ongoing placement support

- Yes, upon successful completion of the Hadoop course at ACTE, you will receive a certificate that is recognized by industry standards. While it may not be directly government-certified, the certificate is widely accepted and valued by top companies and recruiters in the field of big data and Hadoop technologies.

- Yes, the Hadoop course includes hands-on training with real-world projects. Students get to work on case studies, data processing pipelines, and large-scale analytics applications. These projects help learners understand how Hadoop is used in industries such as banking, healthcare, and e-commerce.
